I do not deny that crises of mental origin are a real hardship, but I do think that mortal threat, starvation, disease, and oppression rank higher on the hardship scale than not being called by your preferred pronouns or not being treated as a woman when you still have a twig and berries.

That the latter is assigned hardship status indicates to me that the first world isn’t that bad a place to be these days, mostly because the things I defined as true hardships most certainly do exist in the world outside our first-world protections.

A few years ago, my wife and I went to a sailing school down in Florida, learning to handle monohulls and catamarans for a trip we were planning. We stayed aboard the boat with two other classmates, and each night the instructors treated us to a shipboard dinner. One night near the midpoint of the course, we were about three bottles of wine into an after-dinner discussion. One of the instructors, a college-age guy, liked to lambast Americans for being culturally insensitive. When asked why he thought Americans were that way, he said it was because they are insulated and don’t really travel outside their comfort zones.

If we just spent time in other cultures, he said, Americans would be less arrogant and more understanding of other people’s struggles. I had a suspicion about what “cultures” he had experienced, so I questioned him about the places he had been. His answers confirmed my suspicion: his “extensive travels” had consisted mostly of summer backpacking trips with his upper-class college pals over the past few years, staying in youth hostels and drinking his way across Europe and South Asia, but never really venturing into the countryside beyond the resort and tourist areas.

I pointed out that his cultural “exchanges” had been largely with young people of his own economic stratum who simply came from several different countries, plus the few people paid to make sure he had a safe, good time. Other than their languages, they were pretty much alike. I didn’t do it in a way that demeaned him, but I made sure to get the point across that his view of the world had been formed from extremely limited experiences and was based mostly on siloed opinion rather than fact.

I explained that he had not seen the places I had: the oppression of women in Saudi Arabia, the complete lack of humanity in China, the callous disregard of ethnic and religious minorities in the UAE, the tribal conflicts in equatorial Africa, the slums in Bangkok or those outside Rio, or the caste system that still exists in India. I pointed out that my decades of living and working in these areas, exposed to all layers of these societies, had revealed to me far worse class and ethnic prejudice in these countries than would ever be tolerated in America. In some of these nations, the discrimination was so deeply embedded that it was codified in law.

I explained that study after study has shown that the “poor” in America, compared with Europe’s middle class, live in larger homes and have access to more cheap and nutritious food. They have at least one car, a mobile phone, an average of two televisions, cable TV and internet, refrigerators, microwaves, and air conditioning. Eighty percent of poor households have air conditioning (in 1970, only 36 percent of the entire U.S. population enjoyed it). To top it off, America consistently scores among the least discriminatory nations in the world.

It says a lot that we have so little to complain about that conflict and discord must be invented.

There comes a time when it is more important to be thankful for what we have than angry for what we don’t. There was a time when Americans understood that. I pray that we return to a time when we can stop long enough to appreciate what a great country America still is.

[Tomorrow] we will celebrate the birth of our great nation. It is a time to reflect on the blessings brought forth on this land, and on those the creation of America has shared with the world. In my heart, every day is July 4th; I hope it is for you as well.

As we head toward Independence Day and a celebration of this nation’s founding, the angry chorus of haters with idle hands and minds grows loud. They would have us dwell on the nation’s sins and ignore our great progress toward an always more perfect union. It is no longer just angry academics and activists; the press, too, has joined the act. It is a reminder that the secular religion dominating our cultural institutions is a religion without grace or forgiveness, perpetually anchored in the grievances of the past.

The New York Times produced its 1619 Project to, in the words of its creator, retell the story of our founding. She claimed it was meant to be taken not as fact but as narrative. She recast the United States and its revolution as being about the preservation of slavery. Though the project was widely criticized by historians across the political spectrum, the damage was done, and proudly so. Many people who had a grievance and needed a story around which to weave it latched on to the false claims.

The fabulists ignored the Northern colonies moving against slavery long before Great Britain did. They ignored the writings of our founders, including Thomas Jefferson, who knew the institution of slavery undermined the words “all men are created equal” and would have to end. They ignored the reparations paid in blood on battlefields across America as white men from the North killed their kin from the South to set slaves free.

Though many people now sing “let us live to make men free” in the Battle Hymn of the Republic, Julia Ward Howe’s original 1862 text, from the midst of the Civil War, read, “As [Christ] died to make men holy, let us die to make men free.” And so they did. The fabulists of American history would now, to prop up their own revenue from twenty-first-century grievance, twist those deaths into something else.

Reuters has gotten in on the act. A week before Independence Day, it ran a story tying most living Presidents, two Supreme Court Justices, several Governors, and over 100 legislators to ancestors who owned slaves. Ironically, the only President who did not descend from slave owners is Donald Trump, not Barack Obama.

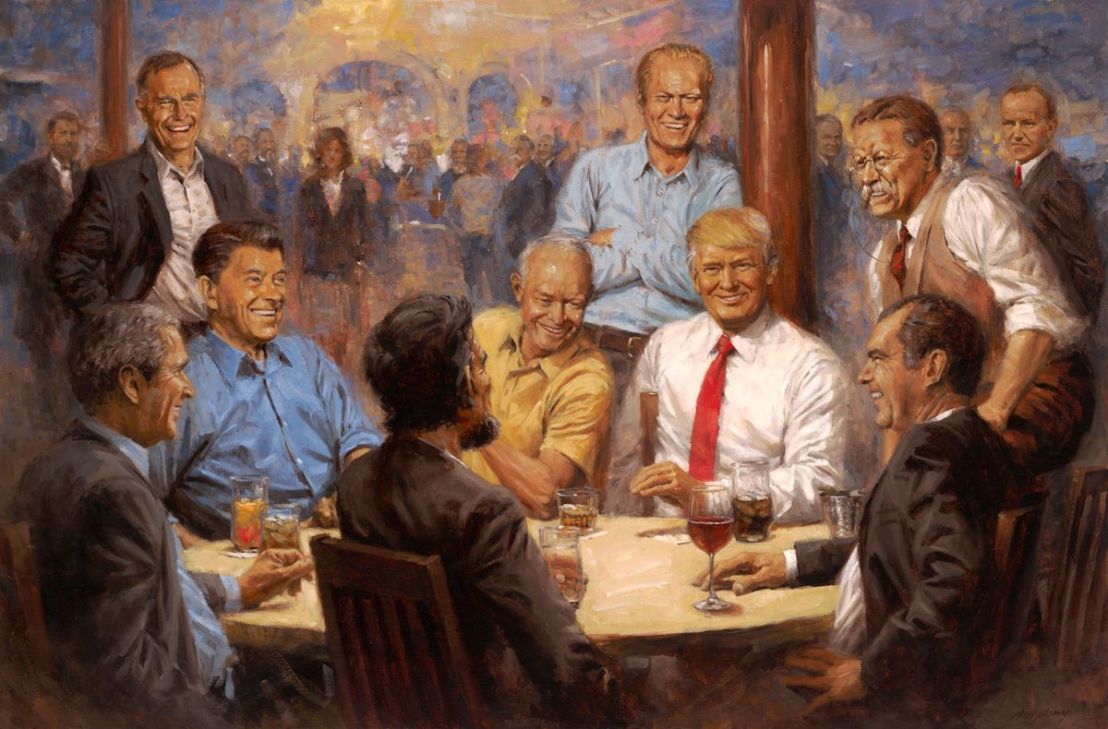

Undoubtedly, Reuters decided to run this piece in the week before Independence Day, rather than during Black History Month or around Juneteenth, because its progressive editors want to perpetuate the race-based conversation about America’s founding started by the New York Times’s 1619 Project. In the 1960s, Americans rejected the progressive movement’s “blame America first” ideology by electing Richard Nixon. Then, in 1984, they overwhelmingly re-elected Ronald Reagan after watching Democrats gather in San Francisco for their presidential convention, where a blame-America chorus lamented all the world’s ills as our fault.

Sadly, some on the right have now taken up the other side of the progressives’ coin. Increasingly, loud voices on the right ponder the difference between our democratically elected presidents and Vladimir Putin. “How can we say he’s worse?” they wonder. Ironically, many of those on the right who have lost the ability to distinguish between a monster and an American are the same people who saw January 6th as no big deal.

This growing strain of progressive anti-Americanism, taken up by the right solely because they increasingly see America not as a land of opportunity but as a land of us versus them, will be repudiated by the American voter. 2022 could be a harbinger of worse to come if the right follows the progressive left down the rabbit hole of hating its own country because it does not control the institutions of power.

I frequently say “people are stupid,” but I also never bet against the American public and its wisdom. Progressives, right-wing populists, and nationalists intent on rejecting the will of the American voter will themselves be rejected. Our Republic rose to defend its ancient freedoms from a monarchy seeking to deny them, then tore itself apart in bloodshed to end slavery, then rid the world of Nazis. Our American Republic will not suffer fools on the left or the right who cannot tell the difference between our always more perfect union and tyrants. Do not bet against America.